Bloom’s Taxonomy in the Next Generation Science Standards (NGSS)

Posted by Andrew Gardner on

In this final installment in a 3-part series, BrainPOP’s Assessment Specialist, Kevin Miklasz, shares his analysis of the Common Core and Next Generation Science Standards in relation to the levels of Bloom’s taxonomy.

Inspired by Dan Meyer’s analysis, I wrote two previous posts on the Common Core Math and Common Core ELA standards. Both broke down the standards on a task-by-task basis and coded each task according to Bloom’s taxonomy. In this part 3, we’re going to turn to the NGSS and see how it stacks up.

Next Generation Science Standards

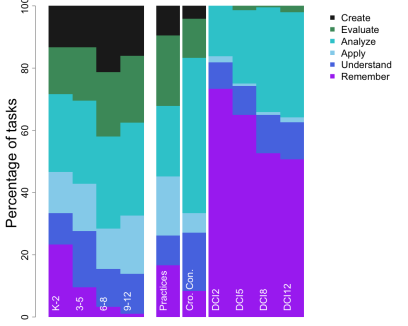

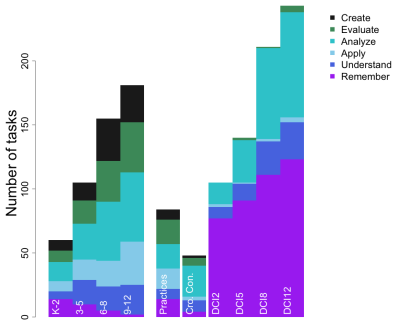

In addition to coding the specific NGSS grade bands (which are shown on the left of both graphs), I also decided to code the framework itself (the right side of both graphs). The Framework was comprehensive enough to be able to separately code the tasks in each of the Framework’s three components: 1) the Science and Engineering Practices, 2) the Crosscutting Concepts, and 3) the Disciplinary Core Ideas (I did break up the DCI by grades). Of course, the components are not expected to be taught in isolation even though I am coding them this way, but it is interesting to note differences in each component. The top graph shows the number of tasks, and the bottom shows the same info by percentages.
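For readers who want to reproduce the two views of the data, here is a minimal sketch of how a list of coded tasks could be turned into the counts behind the top graph and the percentages behind the bottom one. The function names and the sample labels are placeholders for illustration, not the actual coding data, and the six level names assume the revised version of Bloom’s taxonomy.

```python
from collections import Counter

# Assumed level names (revised Bloom's taxonomy), lowest to highest.
BLOOM_LEVELS = ["Remember", "Understand", "Apply", "Analyze", "Evaluate", "Create"]


def tally(coded_tasks):
    """Count tasks per Bloom's level, keeping all six levels in a fixed order."""
    counts = Counter(coded_tasks)
    return {level: counts.get(level, 0) for level in BLOOM_LEVELS}


def to_percentages(counts):
    """Convert raw counts to percentages of the group's total."""
    total = sum(counts.values())
    if total == 0:
        return {level: 0.0 for level in counts}
    return {level: 100.0 * n / total for level, n in counts.items()}


# Placeholder labels for one hypothetical group, NOT the real coding results.
example_group = ["Remember", "Remember", "Analyze", "Create"]
counts = tally(example_group)
shares = to_percentages(counts)
```

Working in percentages is what makes groups with very different totals, such as a single grade band and the full set of Disciplinary Core Ideas, comparable on one chart.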

First, some general patterns. Unsurprisingly, the total number of tasks goes up with grade. There is also a slight trend towards higher level skills at higher grades (though it’s not nearly as strong as in the ELA standards). Finally, the Framework’s components look much as might be expected. The DCIs are unsurprisingly focused mostly on remember tasks. The Practices, on the other hand, spread their tasks across the spectrum. The Crosscutting Concepts emphasize analyze tasks the most.

The most interesting patterns involve the gradebands and their comparison to the Framework. The gradebands show a clear preference for higher level skills in all grades. Create tasks start as early as kindergarten and persist through high school. The main pattern across grades is that Remember tasks (primarily making observations) are strongest in kindergarten and fade in later grades. The most striking feature of the gradebands is that their distribution of tasks most closely resembles that of the Science and Engineering Practices. In other words, the Framework claims that the Practices are supposed to underlie all of the gradeband standards, and that seems to hold up surprisingly well. This is an especially interesting comparison to the CC Mathematical Practices, whose distribution of tasks bore little resemblance to the gradebands themselves. The traditional conception of science as a collection of facts shows up in the DCIs, but is not reflected in the gradebands.

The NGSS are certainly setting a high bar for what they expect from students in terms of science performance in school, and according to this analysis the bar sits at a higher level of Bloom’s taxonomy than either set of Common Core standards.

That’s it, part 3 of 3! Please, let me know your thoughts in the comments below.

Notes on Methodology

In case you are curious how I did this analysis, here are some details.

Some grade-specific standards contained multiple parts, or required multiple tasks from students. I coded each “task” required from students, which usually meant one per standard but sometimes meant several.

For the gradebands, I coded the “performance expectations,” or the things that followed “Students who demonstrate understanding can:”

For the Framework, I had to be a little more creative. For the Science and Engineering Practices, each practice has a section entitled “Goals” that starts with the phrase “By grade 12, students should be able to” and has a series of bullet points. I coded each of those bullet points. For the Crosscutting Concepts, I coded the short description of each concept on page 84 of the Framework. There was a section labelled “Progression” which described how the treatment of the concepts would change over time, but it was hard to code a clear number of tasks from that description. This led to a small number of total tasks, but a reliable relative percentage of tasks. For the Disciplinary Core Ideas, I coded the “Grade Band Endpoints,” which were subdivided into 4 categories for different grade levels. This produced a somewhat artificially high number of tasks, as each sentence from a several-paragraph description was coded separately, but again the relative proportions are reliable.

Each gradeband performance expectation started with a verb that was relatively clearly related to some level of Bloom’s, which allowed for easy, direct coding. The only sequence that I had to interpret a bit was “Developing a model to…” This most resembled a create task, especially when the thing being modeled was sufficiently complex and there were multiple potential ways to model it. But when the model was relatively simple and defined (like the water cycle), this felt much more like an analyze task: just finding and sorting all the obvious and finite pieces, rather than creating or synthesizing something novel from a large and complex assemblage of parts. I used my best judgement to distinguish between Analyze and Create for that verb sequence.
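The verb-based coding described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the verb-to-level table is a hypothetical stand-in rather than the codebook actually used, `code_task` and `model_is_simple` are made-up names, and the "develop a model" case is deliberately left to a human decision, since that is where judgement was needed.

```python
# Hypothetical verb-to-level table for illustration only; the real coding
# was done by hand and may have mapped verbs differently.
VERB_TO_LEVEL = {
    "observe": "Remember",
    "describe": "Understand",
    "use": "Apply",
    "analyze": "Analyze",
    "evaluate": "Evaluate",
    "design": "Create",
}

NEEDS_REVIEW = "NEEDS_REVIEW"


def code_task(text, model_is_simple=None):
    """Assign a Bloom's level from the leading verb of a performance expectation.

    model_is_simple is the coder's own judgement for "develop a model" tasks:
    True -> Analyze, False -> Create, None -> flag for manual review.
    """
    lowered = text.strip().lower()
    if not lowered:
        return None
    if lowered.startswith(("develop a model", "developing a model")):
        if model_is_simple is None:
            return NEEDS_REVIEW
        return "Analyze" if model_is_simple else "Create"
    first_word = lowered.split()[0]
    # Unknown verbs are flagged rather than guessed.
    return VERB_TO_LEVEL.get(first_word, NEEDS_REVIEW)
```

Flagging unknown verbs and ambiguous modeling tasks, instead of forcing them into a level, keeps the automatic part of the process limited to the cases that really are direct.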